Bug Reports / AMD RDNA2 GPUs compute units

« on: April 06, 2022, 10:39:11 PM »

Alexey,

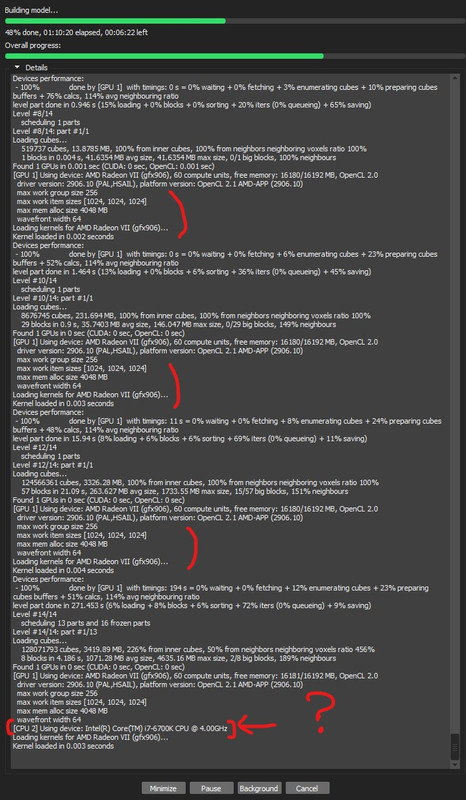

Not sure if this is an actual bug or a driver issue, but I noticed that the number of compute units detected by Metashape is half of what AMD RDNA2 GPUs are actually supposed to have (a 6700XT in my case).

Metashape reports 20 Compute Units instead of 40.

I'm not even sure if performance is affected at all btw.

Windows 10 with the latest Radeon 22.3.1 WHQL drivers.

Edit: Looks like a driver thing. Every other OpenCL app I tested reports the same thing (20 Compute Units).

My Radeon VII (Vega arch GPU) is correctly reported as having 60 Compute Units. Performance doesn't seem to be affected from what I can see (the 6700XT is consistently slightly faster than the Radeon VII).
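
For anyone who wants to check what the driver itself reports, independent of Metashape, here is a minimal sketch. It assumes the third-party pyopencl package is installed; the helper names `format_device` and `list_gpu_compute_units` are made up for illustration. It only reads the standard `CL_DEVICE_MAX_COMPUTE_UNITS` value for each GPU and is not how Metashape does its own detection.

```python
# Sketch: print the compute unit count the OpenCL driver reports per GPU.
# Assumption: the third-party pyopencl package is installed (pip install pyopencl).


def format_device(name, compute_units):
    """Build one report line from a device name and its reported unit count."""
    return f"{name}: {compute_units} compute units"


def list_gpu_compute_units():
    """Return (name, CL_DEVICE_MAX_COMPUTE_UNITS) for every OpenCL GPU device."""
    import pyopencl as cl  # imported here so format_device works without it

    results = []
    for platform in cl.get_platforms():
        try:
            devices = platform.get_devices(device_type=cl.device_type.GPU)
        except cl.Error:
            # A platform without any GPU may raise here; skip it.
            continue
        for device in devices:
            results.append((device.name.strip(), device.max_compute_units))
    return results


if __name__ == "__main__":
    try:
        for name, units in list_gpu_compute_units():
            print(format_device(name, units))
    except Exception as exc:
        # Covers a missing pyopencl install or a missing OpenCL runtime.
        print(f"Could not query OpenCL devices: {exc}")
```

If the number printed here matches what Metashape shows, the value is coming from the driver and not from the application.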

Mak