Python and Java API / [Request] Proper Python Documentation

« on: February 14, 2024, 06:34:45 PM »

Dear Agisoft,

Thank you for the powerful Python API.

While the API itself is great, its documentation is not usable.

Finding anything in the PDF document with over 200 pages is super slow and only feasible when you already know the function name.

This issue (along with other vital points) was already raised in 2022 (https://www.agisoft.com/forum/index.php?topic=14910), but it has not been fixed yet.

There are better formats for documentation (e.g. https://numpy.org/doc/stable/reference/index.html). Why are we stuck with a PDF?

PDFs are nice for paper-printing, but that is not how most of us use software documentation.

Furthermore, writing code anywhere other than PyCharm or a notebook is like not using an IDE at all, because code completion, linting, and checking do not work.

Again, thanks for the great API and please advise when we can expect its documentation to be at the same level.

Cheers,

Marcel

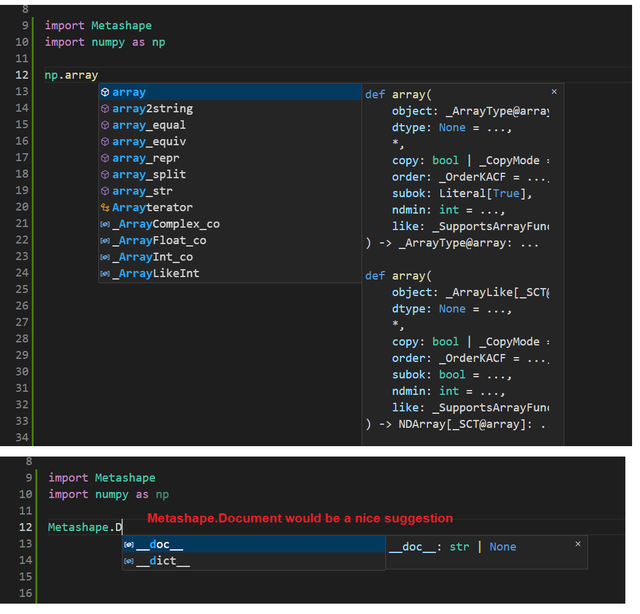

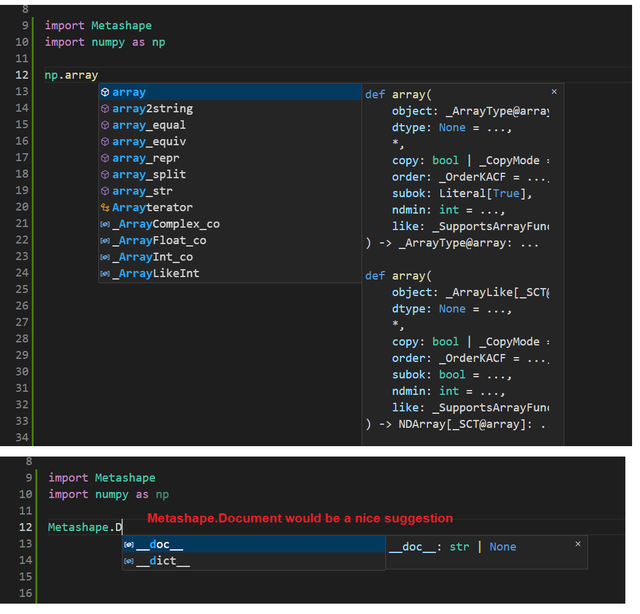

PS: Here is what I mean by linting not working in, e.g., VS Code:
